At Adobe, I'm an Applied Scientist working to improve GenAI image & video systems. I earned my PhD in Experimental Psychology from UC San Diego, under Dr. Judith Fan @ The Cognitive Tools Lab.

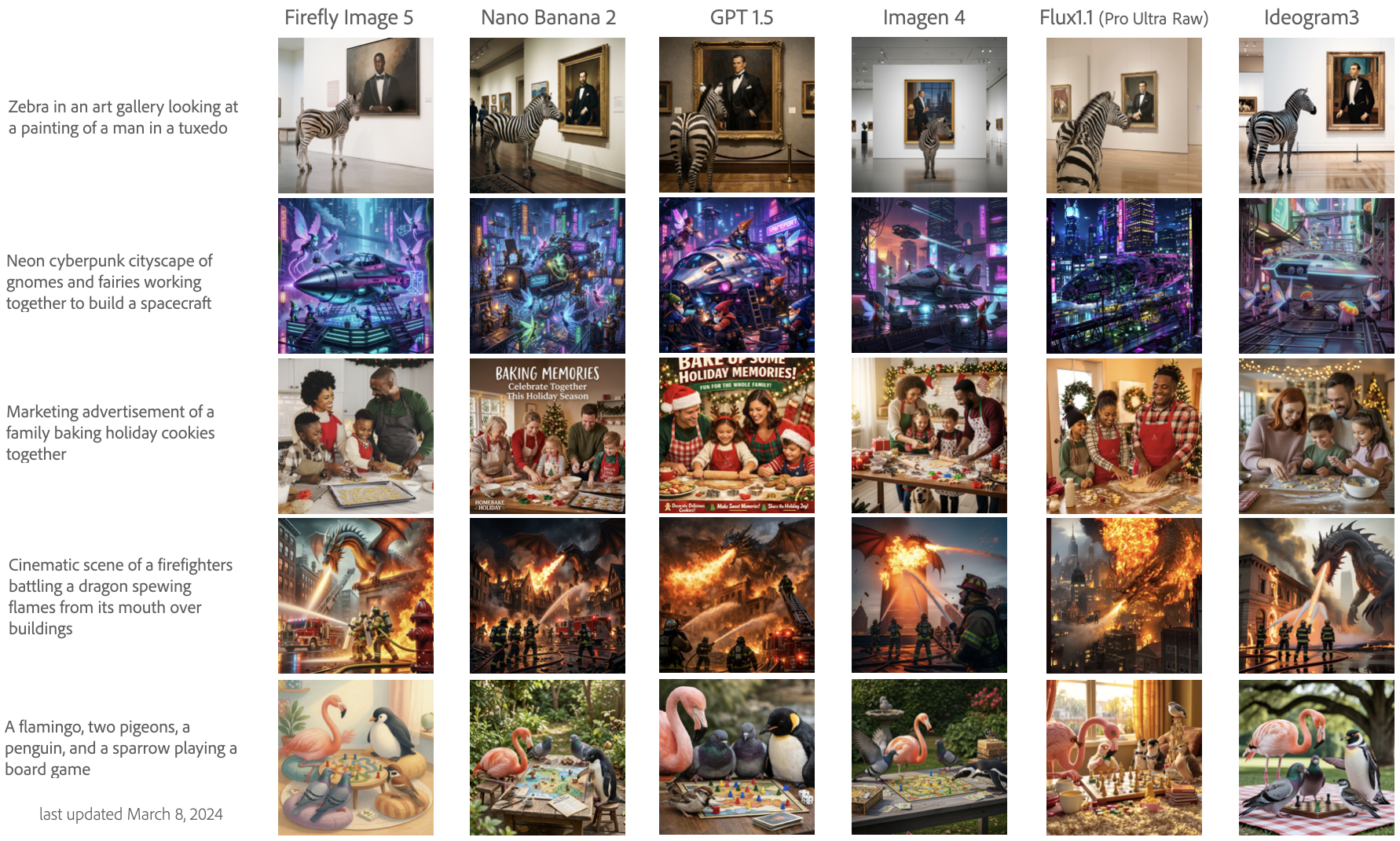

I lead the Scientific Evaluation Team's efforts in evaluating state-of-the-art imaging and image editing technologies like Adobe Firefly. My research statistically measures improvements & regressions in model behavior across text-to-visual & visual-to-visual systems, semantic editing, object recognition, and user intentions.

I do this by developing large-scale benchmarks (e.g., 1K-50K assets, each with 3-5 annotations), which enable me to identify market trends in artist and GenAI behavior. These insights help product managers, data analytics, and model & engineering teams decide how to develop the next generation of creative tools. I also work with a globally and culturally diverse team of professional photographers & editors, graphic designers, and video creators/editors to better understand user needs and human-AI collaboration.

I collaborate closely with AI Ethics teams to ensure that models do not create or enable the creation of harmful content.

My dissertation research evaluated how user intentions shift visual production behavior and downstream user interpretations. I tested this by generating & analyzing large-scale datasets of drawings, diagrams, and data visualizations. In other words, I studied how people make pictures. Now I study how GenAI models make pictures.

research highlights

My current research evaluates text-to-image, image-to-image, image-to-video, and image editing via instructional prompting, masking, layer segmentation, and more. By measuring state-of-the-art model behavior and gathering insights from professional artists, my work helps identify where the market is today and helps product managers define the market of tomorrow. In my day-to-day, I work with modeling and engineering teams to design custom experiments that collect large-scale datasets for comparing different models and analyzing the technical quality and production readiness of their outputs.

To develop culturally-specific GenAI models, I assemble and work closely with teams of artists with extensive lived experience from specific cultures and countries. This human-in-the-loop collaboration ensures that our models do not contribute to erasure or inappropriate Westernization of non-Western cultures.

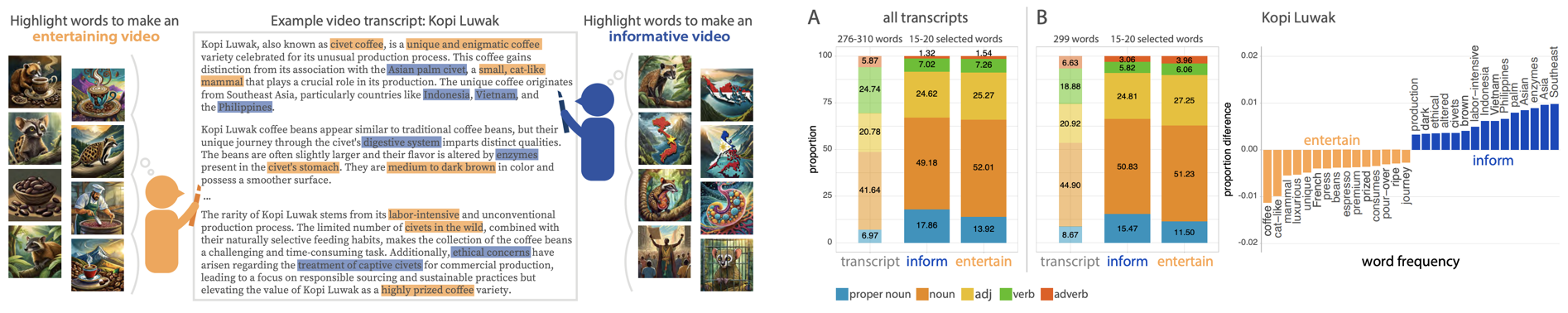

Occasionally, I also conduct qualitative user research. At Adobe Research, I interviewed filmmakers, video editors, and musicians to develop GenAI video editing parameters based on users' creative workflows. These insights helped frame large-scale annotation experiments that were eventually used to fine-tune different model parameters. For example, our Creativity & Cognition 2024 paper evaluated how people (N > 800) and LLMs select B-roll to enrich videos depending on whether their goal is to make entertaining or informative content.

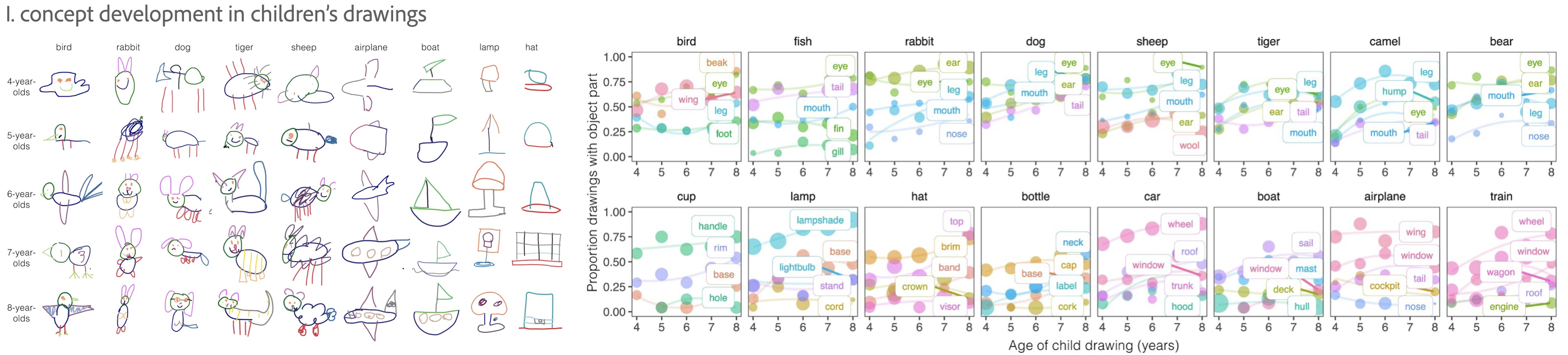

During my PhD, I specialized in large-scale crowdsourcing methods for collecting and analyzing sketches. Datasets like these can be used for a wide array of research domains such as computer vision, attention, memory, semantic segmentation of objects, and information prioritization based on user intentions. See my Publications below to access those datasets, such as our Nature Communications 2024 paper on evaluating concept development in children through their drawings.


journal articles

- *Yang, J., *Huey, H., Lu, X., and Fan, J.E. (submitted). Visual communication of object concepts at different levels of abstraction.

- Mukherjee, K., Huey, H., Stoinski, L.M., Hebart, M.N., Fan, J.E., and Bainbridge, W.A. (2025). Drawings of THINGS: A large-scale drawing dataset of 1,854 object concepts. Behavior Research Methods.
  Paper | Publisher's Page

- Brockbank, E., Verma, A., Lloyd, H., Huey, H., Padilla, L., and Fan, J.E. (2025). Evaluating convergence between two data visualization literacy assessments. Cognitive Research: Principles and Implications.
  Paper | Publisher's Page

- Huey, H., Leake, M., Aneja, D., Fisher, M., and Fan, J.E. (2024). How do video content creation goals impact which concepts people prioritize for generating B-roll imagery? Creativity and Cognition. Chicago, Illinois: Association for Computing Machinery.
  Paper | Poster

- Long, B., Fan, J.E., Huey, H., Chai, R., & Frank, M. C. (2024). Parallel developmental changes in children's production and recognition of line drawings of visual concepts. Nature Communications.
  Paper | Publisher's Page

- Huey, H., Lu, X., Walker, C.M., & Fan, J.E. (2023). Explanatory drawings prioritize functional properties at the expense of visual fidelity. Cognition.
  Paper | Poster | Publisher's Page

- *Huey, H., *Jordan, M., & Dillon, M.R. (2023). Shortest path problems on different geometric surfaces: Reasoning about linearity through development. Developmental Psychology.
  Paper | Publisher's Page

- Aboody, R., Huey, H., & Jara-Ettinger, J. (2022). Preschoolers decide who is knowledgeable, who to inform, and who to trust via a causal understanding of how knowledge relates to action. Cognition.
  Paper | Publisher's Page

- Jara-Ettinger, J., Floyd, S., Huey, H., Tenenbaum, J.B., & Schulz, L. (2019). Social pragmatics: Four and five-year-olds rely on commonsense psychology to resolve referential ambiguities. Child Development.
  Paper | Publisher's Page

peer-reviewed conference proceedings & posters

-

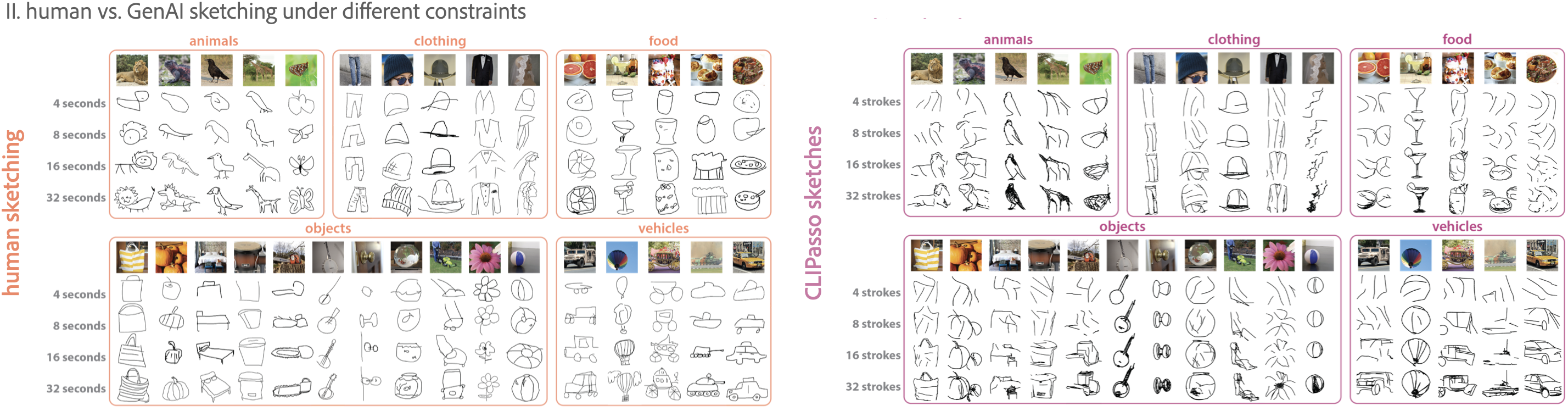

*Mukherjee, K., *Huey, H., *Lu, X., Vinker, Y., Aguina-Kang, R., and Fan, J.E. (2023).

SEVA: Leveraging sketches to evaluate alignment between human and machine visual abstraction.

Advances in Neural Information Processing Systems, Datasets and Benchmarks Track.

New Orleans, Lousiana: NeurIPS

Paper Github -

*Huey, H., *Oey, L.A., Lloyd, H.S., and Fan, J.E. (2023).

How do communicative goals guide which data visualizations people think are effective?

Proceedings of the 45th Annual Conference of the Cognitive Science Society.

Sydney, Australia: Cognitive Science Society.

Paper Poster -

*Mukherjee, K., *Huey, H., *Lu, X., Vinker, Y., Aguina-Kang, R., and Fan, J.E. (2023).

Evaluating machine comprehension of sketch meaning at different levels of abstraction.

Proceedings of the 45th Annual Conference of the Cognitive Science Society.

Sydney, Australia: Cognitive Science Society.

Paper Poster -

Lloyd, H.S., Huey, H., Brockbank, E., Padilla, L., and Fan, J.E. (2023).

What is graph comprehension and how do you measure it?

Proceedings of the 45th Annual Conference of the Cognitive Science Society.

Sydney, Australia: Cognitive Science Society.

Abstract Poster -

*Huey, H., *Long, B., Yang, J., George, K., and Fan, J.E. (2022).

Developmental changes in the semantic part structure of drawn objects.

Proceedings of the 44th Annual Conference of the Cognitive Science Society.

Toronto, Canada: Cognitive Science Society.

Paper Poster -

Nagabandi, M., Yang, J., Huey, H., Fan, J.E. (2022).

Decomposing objects into parts from vision and language.

Proceedings of the 44th Annual Conference of the Cognitive Science Society.

Toronto, Canada: Cognitive Science Society.

Abstract -

Huey, H., Walker, C.M., & Fan, J.E. (2021).

How do the semantic properties of visual explanations guide causal inference?

Proceedings of the 43rd Annual Conference of the Cognitive Science Society.

(Virtual Meeting) Vienna, Austria: Cognitive Science Society.

Paper Poster Video - Huey, H., Loncar, N., Jordan, M., & Dillon, M.R. (2019). A tale of paths between two points: Children's identification of linearity on different geometric surfaces. Poster presented at the Biennial Meeting of the Society for Research in Child Development 2019.

- Loncar, N., Huey, H., & Dillon, M.R. (2019). Infants fail to categorize forms by kinds. Poster presented at the Biennial Meeting of the Society for Research in Child Development 2019.

-

Aboody, R., Huey, H., & Jara-Ettinger, J. (2018). Success does

not imply knowledge: Preschoolers believe that accurate predictions reveal

prior knowledge, but accurate observations do not.

Proceedings of the 40th Annual Conference of the Cognitive Science Society.

Paper -

Aboody, R., Huey, H., & Jara-Ettinger, J. (2017).

Success does not imply knowledge: Preschoolers believe that accurate predictions reveal prior knowledge,

but accurate observations do not.

Poster presented at the Cognitive Development Society's Bi-Annual Meeting 2017.

Poster -

workshop presentations

- Mukherjee, K., Huey, H., Rogers, T., & Fan, J.E. (2022). From Images to Symbols: Drawing as a Window into the Mind. Proceedings of the 44th Annual Conference of the Cognitive Science Society. Toronto, Canada: Cognitive Science Society. Co-organizer of workshop & co-designer of website.
  Website | Workshop Overview

- Pitt, B., Huey, H., Jordan, M., Hart, Y., Dillon, M.R., Bottini, R., Carstensen, A., Boni, I., Piantadosi, S., Gibson, E., Marghetis, T., Holmes, K.J., Star-Lack, M., & Chacon, S. (2022). Dimensions of Diversity in Spatial Cognition: Culture, Context, Age, and Ability. Proceedings of the 44th Annual Conference of the Cognitive Science Society. Toronto, Canada: Cognitive Science Society. Invited speaker.
  Workshop Overview